Resurrect an old smartphone

Every one of us probably has old smartphones lying around somewhere, and some of them would still run a reasonably current Android. The phone I used two phones ago was a OnePlus 3, which has been sitting in a drawer for the past few years. I had already unlocked it back in the day and installed LineageOS (version 15.1, Android 8.1). The current version for the OnePlus 3 is LineageOS 18.1 (Android 11, which is not the current Android, but good enough). Updating from 15.1 to 18.1 without deleting everything on the phone is not easily possible, so I will install 18.1 via recovery. The LineageOS website has a step-by-step guide, of which I only need half because the phone is already unlocked.

Disclaimer: I am doing this with an old phone I had in a drawer for years. If you don't know what you are doing, don't ever do this with a phone you rely on.

I used adb and fastboot on Arch Linux to flash and sideload everything.
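Before plugging in the phone it can help to confirm that both tools are actually on the PATH. The following is a minimal sketch, not part of the original procedure; `require_tools` is a hypothetical helper name, and the package hint assumes a stock Arch Linux setup.

```shell
# Report any of the named commands that are missing from PATH.
# Returns non-zero if at least one of them is missing.
require_tools() {
    missing=0
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            echo "missing: $tool" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Assumption: on Arch Linux both tools come from the android-tools package:
#   sudo pacman -S android-tools
# Possible usage:
#   require_tools adb fastboot && adb version
```

The helper only checks that the commands exist; it says nothing about whether the installed versions are recent enough for the phone.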

After starting the phone in fastboot mode, I checked whether my Linux PC could see the device:

$ fastboot devices
2b7f3166 fastboot

Yay.
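The same check can be scripted, for example to wait until the phone shows up after a reboot into the bootloader. This is only a sketch: `has_fastboot_device` and `wait_for_fastboot` are hypothetical helper names, and it assumes that every device line printed by `fastboot devices` has the serial in the first column and the word `fastboot` in the second, as in the output above.

```shell
# Succeeds if the `fastboot devices` output on stdin lists at least one device.
# Assumes each device line looks like "<serial> fastboot".
has_fastboot_device() {
    awk '$2 == "fastboot" { found = 1 } END { exit !found }'
}

# Poll once per second until a device appears or the timeout (in seconds,
# default 30) has elapsed. Returns non-zero on timeout.
wait_for_fastboot() {
    timeout="${1:-30}"
    elapsed=0
    while [ "$elapsed" -lt "$timeout" ]; do
        if fastboot devices 2>/dev/null | has_fastboot_device; then
            return 0
        fi
        sleep 1
        elapsed=$((elapsed + 1))
    done
    return 1
}

# Possible usage:
#   wait_for_fastboot 60 && echo "phone found"
```

If nothing shows up, missing USB permissions (udev rules) or a charge-only cable are common culprits worth ruling out first.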

Meanwhile: updating the radio/modem

I first tried to install the new LineageOS version as described below, but the radio/modem firmware was too old, so I needed to update that first.

First I installed the current version of TWRP, because the phone already had the LineageOS recovery on it: Instructions and Download. Then flash the recovery (the phone needs to be in the bootloader):

$ fastboot flash recovery twrp-3.7.0_9-0-oneplus3.img
Sending 'recovery' (29821 KB) OKAY [ 1.011s]
Writing 'recovery' OKAY [ 0.216s]
Finished. Total time: 1.271s

Next, boot the phone into recovery to start TWRP.

I got the radio firmware from the official OnePlus website, where it is included in the final update of OxygenOS 9: OnePlus3Oxygen_16_OTA_086_all_1911042118_997006918a894202.zip.

Additionally I got the radio+modem from this xda-forum link.

The files inside are the same as in the official update, but without the boot image.
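One way to check such a claim yourself is to compare the file listings of the two archives. The sketch below is an illustration, not what was done for this post: `only_in_first` is a hypothetical helper, it assumes `unzip` is installed for producing the listings, and it compares entry names only, not file contents.

```shell
# Print entries that appear in the first listing but not in the second.
# Both arguments are text files with one archive entry name per line.
only_in_first() {
    a=$(mktemp) && b=$(mktemp) || return 1
    sort "$1" > "$a"
    sort "$2" > "$b"
    comm -23 "$a" "$b"
    rm -f "$a" "$b"
}

# Possible usage (unzip -Z1 prints one entry name per line):
#   unzip -Z1 OnePlus3Oxygen_16_OTA_086_all_1911042118_997006918a894202.zip > full.txt
#   unzip -Z1 'Stable9.0.6 Firmware+Modem_OnePlus3.zip' > firmware.txt
#   only_in_first full.txt firmware.txt
```

Entries printed by the last command exist only in the official update. To compare contents as well, the CRC column of `unzip -v` could be included in the listings.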

So installing the xda-forum zip file via sideload updates only the relevant parts:

$ adb sideload Stable9.0.6\ Firmware+Modem_OnePlus3.zip
serving: 'Stable9.0.6 Firmware+Modem_OnePlus3.zip' (~51%) adb: failed to read command: Success

Despite that odd adb message, the phone itself reported that the installation was successful.

Updating to Android 11 (LineageOS 18.1)

Now back to the actual update.

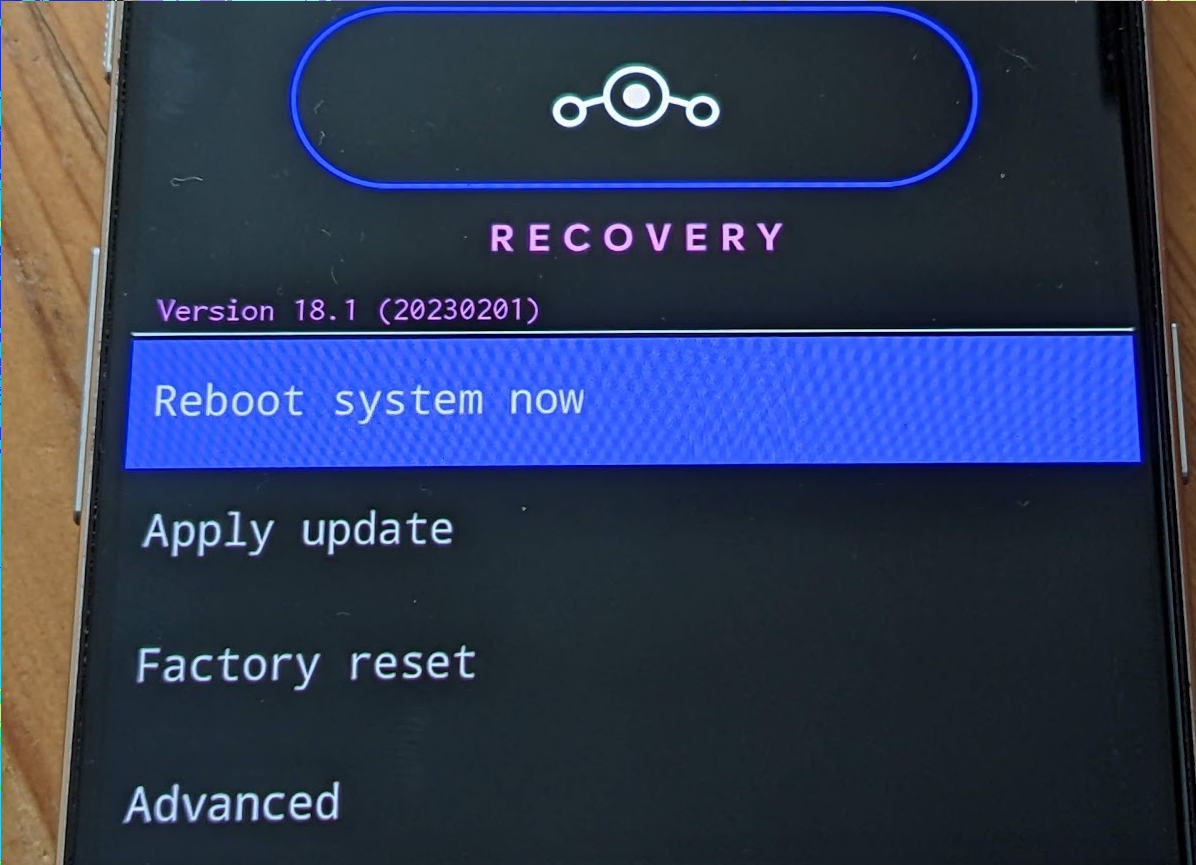

Maybe I could have used TWRP for this too, but I wanted to use the LineageOS recovery image. I downloaded it from this page: https://download.lineageos.org/oneplus3

Flashed it:

$ fastboot flash recovery lineage-18.1-20230201-recovery-oneplus3.img
Sending 'recovery' (19361 KB) OKAY [ 0.632s]
Writing 'recovery' OKAY [ 0.148s]
Finished. Total time: 0.827s

For the next step I downloaded the correct LineageOS version for my phone: lineage-18.1-20230201-nightly-oneplus3-signed.zip.

And the Google Apps package for Android 11.0 arm64: MindTheGapps-11.0.0-arm64-20220217_100228.zip.
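Checking the downloads before flashing is an optional extra step that is not part of the procedure described here. As a minimal sketch, assuming the download page publishes a SHA-256 checksum for each file and that `sha256sum` from coreutils is available, a hypothetical `verify_sha256` helper could look like this:

```shell
# Compare a file's SHA-256 digest against an expected hex string.
# Prints "OK: <file>" on a match, returns non-zero on a mismatch.
verify_sha256() {
    file="$1"
    expected="$2"
    actual=$(sha256sum "$file" | awk '{ print $1 }')
    if [ "$actual" = "$expected" ]; then
        echo "OK: $file"
    else
        echo "MISMATCH: $file" >&2
        return 1
    fi
}

# Possible usage (the checksum is a placeholder to be copied from the download page):
#   verify_sha256 lineage-18.1-20230201-nightly-oneplus3-signed.zip <sha256-from-download-page>
```

A mismatch usually just means a truncated download, which is much cheaper to discover on the PC than halfway through a sideload.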

In recovery, choose "Factory reset", then "Format data/factory reset". Be aware that everything on the phone will be deleted! For me this is a phone I haven't touched in years, so nothing relevant is on there.

The next step is "Apply update", then "Apply from ADB". The instructions for this are shown on the phone screen:

$ adb sideload lineage-18.1-20230201-nightly-oneplus3-signed.zip
Total xfer: 1.00x

The "radio/modem too old" error from my first attempt was: "Modem firmware from OxygenOS 9.0.2 or newer stock ROMs is prerequisite to be compatible with this build." Now everything works, because the radio/modem has already been updated.

And next the Google apps:

$ adb sideload MindTheGapps-11.0.0-arm64-20220217_100228.zip
Total xfer: 1.00x

The signature check for this package always fails, so accepting the warning is "fine".
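The sideloads in this post ended in two different ways on the PC side: a clean "Total xfer" line, or an early "failed to read command" message even though the phone reported success. If the steps are ever scripted, a small classifier can keep the two apart. This is a sketch based only on the outputs shown here; `classify_sideload_output` is a hypothetical helper and other adb versions may print different text.

```shell
# Read captured `adb sideload` output on stdin and print a rough verdict:
#   complete     adb reported the full transfer
#   check-phone  adb gave up reading the reply; the phone screen decides
#   unknown      anything else
classify_sideload_output() {
    output=$(cat)
    case "$output" in
        *"Total xfer:"*) echo "complete" ;;
        *"failed to read command"*) echo "check-phone" ;;
        *) echo "unknown" ;;
    esac
}

# Possible usage:
#   adb sideload lineage-18.1-20230201-nightly-oneplus3-signed.zip 2>&1 | classify_sideload_output
```

In the "check-phone" case the result shown by the recovery is what counts, as with the firmware zip earlier.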

Now the phone can be rebooted and boots Android 11 (LineageOS 18.1). 🥳