cress.space final words

In 2016 we participated in the NASA Space Apps Challenge. Our team wanted to automate growing cress as far as possible.

Our mission statement was:

set up a demonstrator greenhouse

autonomous farming through machine learning

add a gaming part for users to help nurture the plants

We didn't implement the last part, but the first two were successful.

Hardware

On the first Space Apps Challenge weekend we built our first planting box:

On the following weekends we improved the first box a lot. We used a Raspberry Pi camera to take a photo from above every five minutes:

After a few months we started to build a second and later a third box. Every iteration was better because we learned from previous versions.

The boxes are built by cutting IKEA Samla boxes, which are cheap and easy to work with.

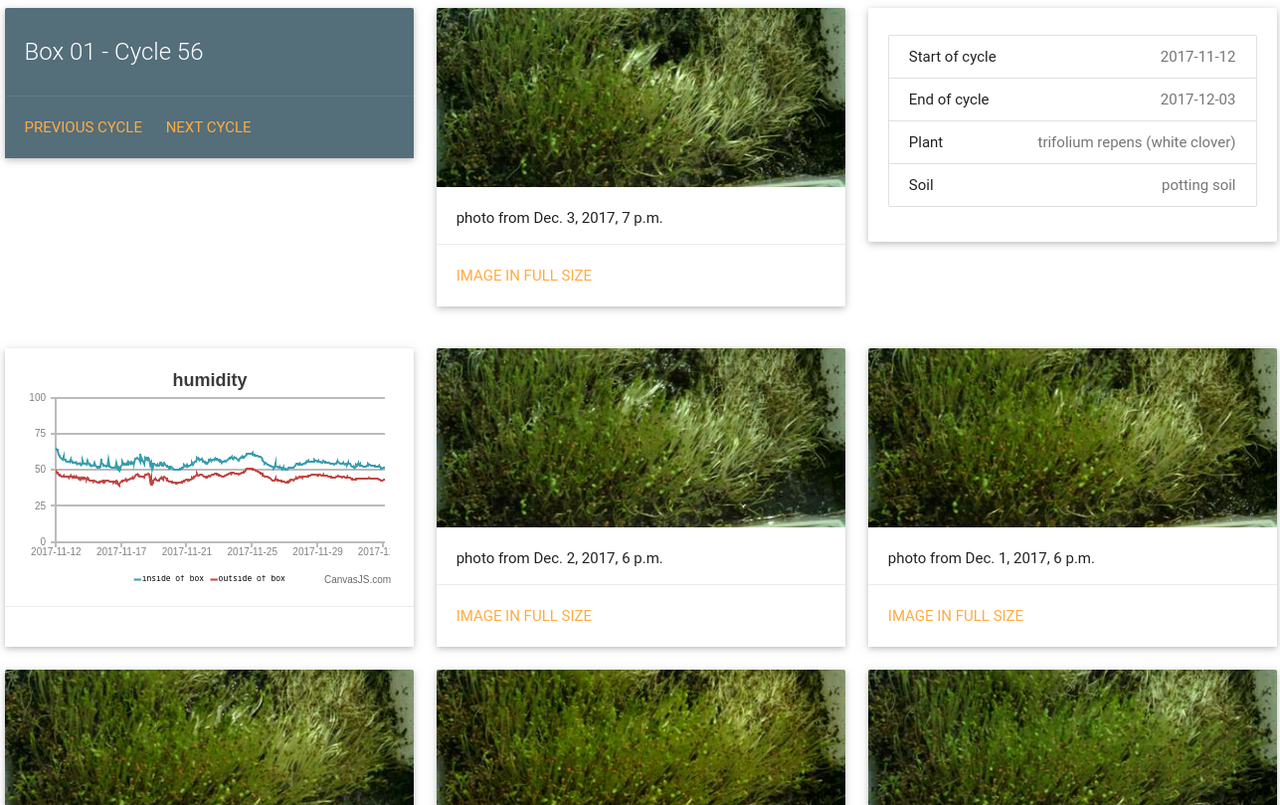

Every box had one DHT22 sensor for temperature and humidity inside the box and one outside. After the first weeks we added fans to exchange air between the inside and the outside of the box, which was very important for preventing mold on the plants. For watering we used very cheap pumps that can be switched by a Raspberry Pi. The timing was tricky because you cannot control the amount of water directly, only how long the pump is powered.
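Since only the on-time of the pump can be controlled, a target amount of water has to be translated into a run time. The following is a minimal sketch of that idea, not our actual code. It assumes the flow rate was measured once by hand (for example by pumping into a measuring cup); the function names, the flow rate and the `switch` callback are all hypothetical.

```python
import time


def pump_seconds(volume_ml, flow_ml_per_s):
    """Convert a target volume into a pump run time.

    flow_ml_per_s is an assumed calibration value, measured by hand.
    """
    if volume_ml < 0:
        raise ValueError("volume_ml must not be negative")
    if flow_ml_per_s <= 0:
        raise ValueError("flow_ml_per_s must be positive")
    return volume_ml / flow_ml_per_s


def water(volume_ml, flow_ml_per_s, switch, sleep=time.sleep):
    """Power the pump for the computed time, then switch it off again.

    switch(True/False) is a placeholder for whatever drives the pump,
    for example a GPIO pin connected to a relay.
    """
    duration = pump_seconds(volume_ml, flow_ml_per_s)
    switch(True)
    try:
        sleep(duration)
    finally:
        # Always cut power, even if the wait is interrupted.
        switch(False)
    return duration
```

With a cheap pump the real flow rate probably drifts with water level and hose length, so a value like this would need to be re-measured from time to time.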

In the first iteration we measured the soil moisture with very cheap metal sensors that measure the conductivity between their probes. Later we added capacitive sensors as well.

To get the best possible photos we switched on a small 12 V LED before taking each photo and switched it off again afterwards.
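The "light on, photo, light off" sequence can be sketched as a context manager, so the LED is switched off even if the capture fails. This is only an illustration under assumptions: `set_led` and `capture` stand in for the real LED driver and camera call, and the warm-up delay is a made-up value.

```python
from contextlib import contextmanager
import time


@contextmanager
def led_on(set_led, warmup_s=0.5, sleep=time.sleep):
    """Switch the LED on for the duration of the with-block.

    set_led(True/False) is a placeholder for the real LED driver;
    warmup_s is an assumed settling time for light and camera exposure.
    """
    set_led(True)
    try:
        sleep(warmup_s)
        yield
    finally:
        # Runs even if taking the photo fails, so the LED never stays on.
        set_led(False)


def take_lit_photo(set_led, capture, **kwargs):
    """Capture one photo with the LED on; capture() is a placeholder."""
    with led_on(set_led, **kwargs):
        return capture()
```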

Image of the setup from above:

In the final iterations (the last three months) we experimented with light, or rather with the absence of light. The cress still grows without any sunlight, but it tastes different and is more yellowish than green.

Software

The Raspberry Pis pushed every photo and every sensor value to a REST API. The website was built with Python using the Django web framework.
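To give an idea of what pushing a sensor value can look like on the Raspberry Pi side, here is a small sketch using only the Python standard library. The URL path, the JSON field names and both function names are invented for this example and do not describe the real cress.space API; the archived repository is the place to look for that.

```python
import json
import urllib.request
from datetime import datetime, timezone


def build_sensor_request(base_url, box_id, sensor, value, when=None):
    """Build a POST request carrying one sensor reading as JSON.

    The URL path and the field names are made up for this sketch.
    """
    when = when or datetime.now(timezone.utc)
    payload = {
        "box": box_id,
        "sensor": sensor,
        "value": value,
        "timestamp": when.isoformat(),
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/api/readings/",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def push(request, timeout=10):
    """Send the request and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

Building the request separately from sending it makes it possible to check the payload without a network connection.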

The cress.space website showed an image of the growing plants for every day. Here are the last days of a cycle growing white clover:

The code of the website is archived at https://github.com/aerospaceresearch/cress-website.